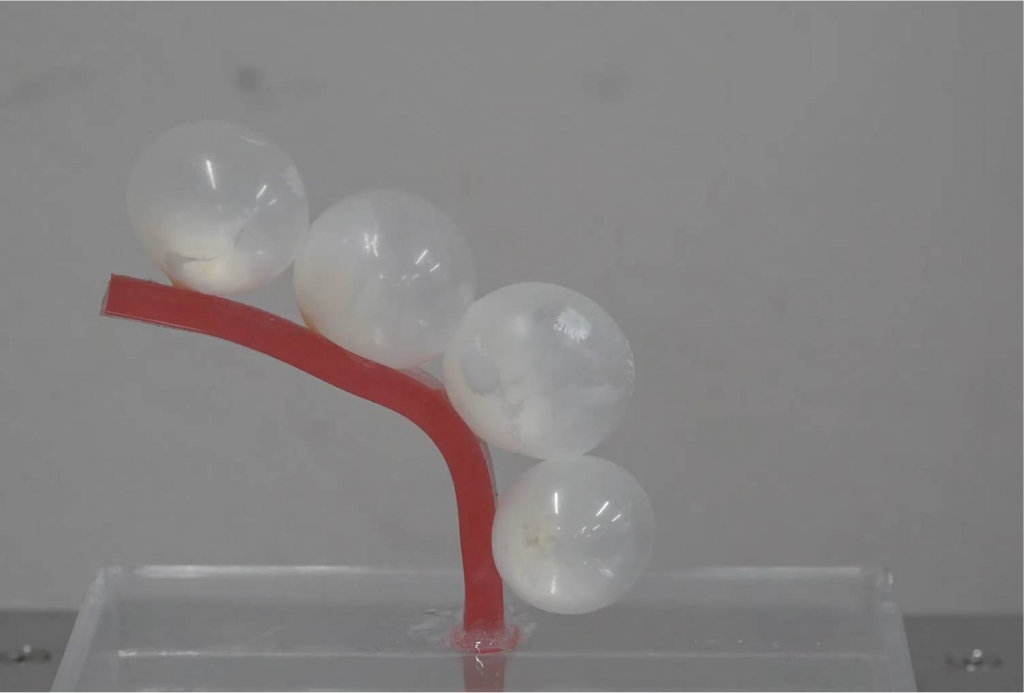

Micro Elastic Pouch Motors: Elastically Deformable and Miniaturized Soft Actuators Using Liquid-to-Gas Phase Change

(IEEE RA-L 2021, IEEE RoboSoft 2021)

We proposed Micro Elastic Pouch Motors, highly deformable, miniaturized soft actuators made of an elastic rubber pouch filled with a low-boiling-point liquid. When the liquid reaches 34 °C, it evaporates inside the pouch, and the whole structure inflates. The proposed fabrication method yields a miniaturized pouch approximately 5 mm in diameter with a thin rubber membrane; the pouch can inflate to 86 times its initial volume or more and can generate a maximum force of approximately 20 N.

CoVR: Co-located Virtual Reality Experience Sharing for Facilitating Joint Attention via Projected User-Perspective View

(ACM SIGGRAPH Asia 2020 ETech)

VR experience sharing between users wearing head-mounted displays (HMD users) and users not wearing HMDs (Non-HMD users) is a promising approach to bridging the experience gap between these users. We proposed “CoVR,” a co-located VR sharing system for HMD and Non-HMD users that presents projected user-perspective images using an HMD device with a head-mounted focus-free projector. We introduce a design methodology for displaying images that considers the viewpoint and perspective of the images presented to HMD and Non-HMD users, together with additional information added to the images, and we demonstrate applications.